AI Is Not Neutral—It Shapes Human Lives

AI is already influencing decisions that affect health, freedom, and wellbeing.

As Wagner explains:

“When something impacts our lives, our wellbeing, our freedom—ethical issues arise.”

From diagnosing diseases to shaping what information we see, AI systems are not just tools—they are actors in human systems.

And that means ethics is no longer optional.

The Promise—and the Risk—of AI in Healthcare

One of the clearest examples comes from healthcare.

AI can act as a “second reader” in radiology—analyzing scans alongside doctors to detect tumors or abnormalities faster and at scale. This is especially critical given the global shortage of radiologists.

“We have a shortage of human labor… and AIs are very good at visual pattern recognition.”

But here’s the tension:

- AI can improve accuracy and speed

- Yet it can also introduce new risks—from hallucinations to blind trust

And that tension exists across every sector—not just healthcare.

The 5 Ethical Risks You Cannot Ignore

Wagner highlights several ethical fault lines that are already shaping our AI future:

1. The Black Box Problem (Lack of Transparency)

AI often produces results without explaining how it got there.

“The doctor doesn’t know why… and that becomes a big ethical issue.”

👉 If we don’t understand decisions, we cannot trust or challenge them.

2. Bias Is Built Into the System

AI learns from historical data—which is never neutral.

- Gender bias

- Ethnic bias

- Cultural bias (e.g., Western-centric models)

👉 If unchecked, AI doesn’t remove inequality—it scales it.

3. Manipulation at Scale

AI can predict behavior—and influence it.

From targeted ads to political messaging, the ethical risk is clear:

👉 Who controls the system controls influence.

4. Over-Reliance on AI (Loss of Critical Thinking)

Humans tend to defer to AI—even when it’s wrong.

“You can’t turn your brain off… you have to be very critical.”

👉 The danger isn’t just bad AI—it’s blind trust in AI.

5. Responsibility Becomes Blurred

When AI makes a mistake—who is accountable?

- The developer?

- The user?

- The institution?

👉 Traditional liability rules assume a clearly identifiable human decision-maker, so they no longer map cleanly onto decisions shaped by AI.

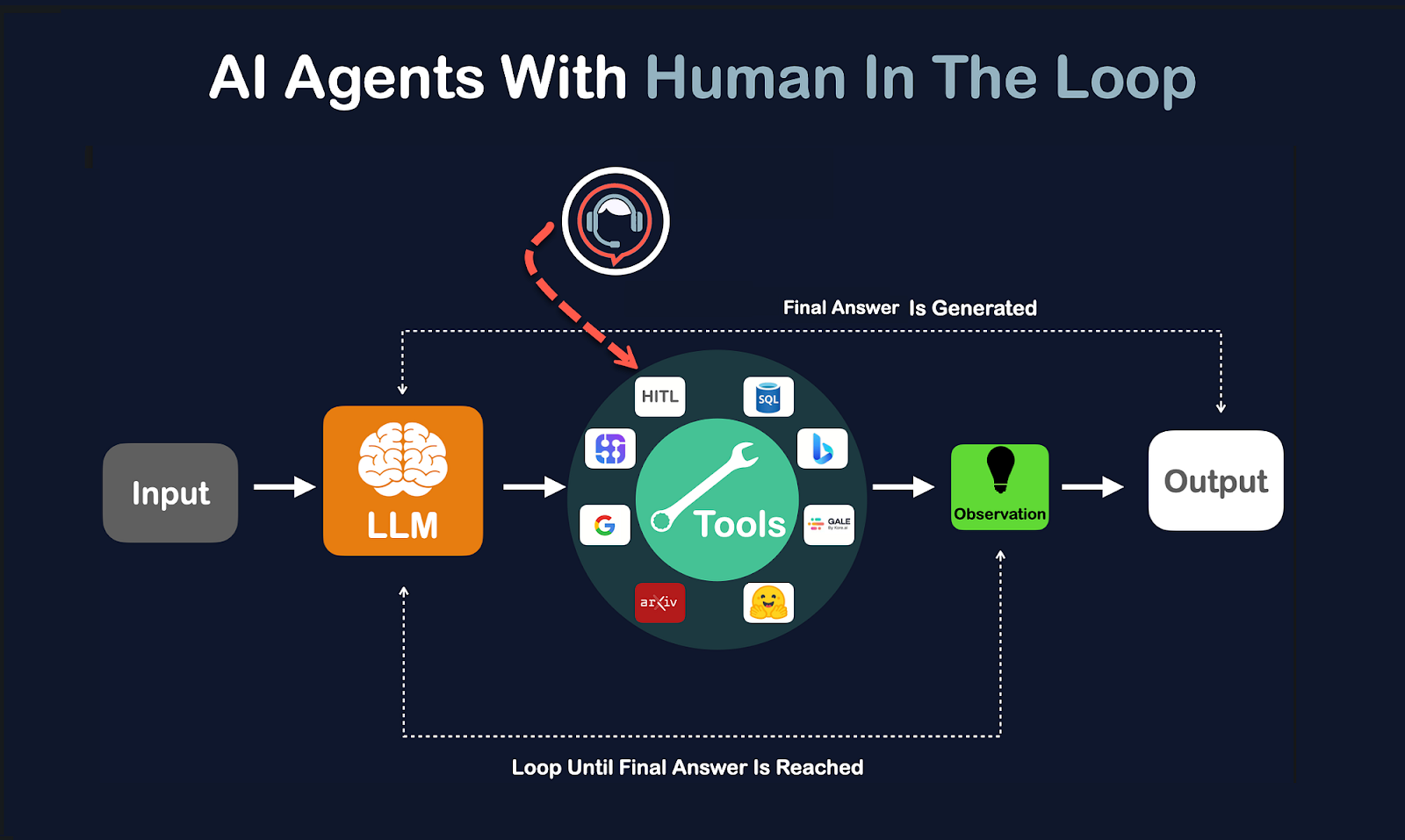

The Most Important Principle: Human + AI, Not Human vs AI

One of the strongest conclusions from the conversation is this:

The future is not AI replacing humans—it’s AI collaborating with humans.

“As long as humans and AI have different strengths and weaknesses, we should keep the human in the loop.”

Examples:

- AI detects patterns → Humans apply context

- AI scales decisions → Humans ensure meaning and ethics

- AI is fast → Humans are accountable

👉 Ethical AI is not autonomous—it is co-created.
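
One way to picture the human-in-the-loop idea is the radiology "second reader" setup mentioned earlier, where the AI never decides alone. The sketch below is purely illustrative: the `Reading` type, the function names, and the finding labels are hypothetical, not taken from the conversation or from any real clinical system.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One reader's finding for a scan (hypothetical structure)."""
    scan_id: str
    finding: str  # e.g. "normal" or "abnormal"


def needs_review(human: Reading, ai: Reading) -> bool:
    """Flag a scan for a further human look when the two readers disagree."""
    if human.scan_id != ai.scan_id:
        raise ValueError("readings refer to different scans")
    return human.finding != ai.finding


def final_decision(human: Reading, ai: Reading) -> str:
    """The human reading stays the decision of record; the AI only adds a flag."""
    flag = " (flagged: AI disagrees)" if needs_review(human, ai) else ""
    return f"{human.scan_id}: {human.finding}{flag}"
```

The design choice here is that a disagreement triggers more human attention rather than an automatic override, which keeps accountability with the person.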

What You Should Start Doing Today (Practical Actions)

This is where the conversation becomes actionable.

Here are immediate steps anyone can implement:

1. Ask the Ethical Question—Every Time

When using AI, don’t just ask “Is this correct?”

Ask:

- Is this ethical?

- Who does this impact?

- Who might be harmed?

👉 Add an “ethics lens” to every AI output.

2. Use AI to Challenge Your Thinking—not Replace It

A powerful technique:

- Ask AI for an answer

- Then ask:

  - “What am I missing?”

  - “Who could be negatively affected?”

  - “What would an ethicist say?”

👉 Turn AI into a thinking partner, not an authority.
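
If you work with an AI tool programmatically, the same habit can be made routine. The following is a minimal sketch, assuming you already have the AI's first answer as a string. The function name `build_challenge_prompts` is hypothetical, and the code only builds the follow-up prompts; it does not call any AI service.

```python
# The three follow-up questions from the technique above.
CHALLENGE_QUESTIONS = [
    "What am I missing?",
    "Who could be negatively affected?",
    "What would an ethicist say?",
]


def build_challenge_prompts(first_answer: str) -> list[str]:
    """Pair the AI's first answer with each challenge question."""
    return [
        f"Here is your earlier answer:\n{first_answer}\n\n{question}"
        for question in CHALLENGE_QUESTIONS
    ]
```

Each resulting prompt can be pasted back into the same conversation so the AI has to critique its own answer.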

3. Train Your Critical Thinking (AI Literacy)

Wagner suggests a simple but powerful method:

Test AI on topics you already understand.

👉 This helps you:

- Spot errors

- Understand limitations

- Build intuition about reliability
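
For readers who want to keep score, here is a rough sketch of that test. It assumes you have written down questions whose answers you can verify yourself and collected the AI's answers by hand. The function name and the exact-match comparison are illustrative assumptions; real answers usually need a human judgment call rather than a string comparison.

```python
def score_known_answers(
    known: dict[str, str], ai_answers: dict[str, str]
) -> tuple[float, list[str]]:
    """Return the share of matching answers and the questions the AI got wrong."""
    if not known:
        raise ValueError("need at least one question you can verify yourself")
    # A missing AI answer counts as wrong; comparison ignores case and outer spaces.
    wrong = [
        question
        for question, expected in known.items()
        if ai_answers.get(question, "").strip().lower() != expected.strip().lower()
    ]
    accuracy = 1 - len(wrong) / len(known)
    return accuracy, wrong
```

The list of wrong answers is often more useful than the score itself, because it shows where the tool tends to fail.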

4. Protect Yourself from AI-Driven Manipulation

We are entering an era of:

- Deepfakes

- Voice cloning scams

- Synthetic identities

Practical step:

👉 Create family verification systems (e.g., code words for emergencies)

5. Be Conscious of Your Data

AI runs on data—and often your data.

Ask:

- Where is my data going?

- How is it reused?

- Would I consent to its future use?

👉 Data ethics is personal ethics.

A Bigger Question: What Does a Good Life Look Like With AI?

Beyond risks and solutions, AI forces a deeper reflection:

What is the purpose of human life when machines can do most of the work?

Wagner points to a critical shift:

We may need to rethink what a “good life” actually means.

This is no longer theoretical.

It affects:

- Work

- Identity

- Value creation

- Society itself

👉 Ethics is not just about AI systems—it’s about the future of being human.

Final Takeaway: Ethics Is Not Abstract—It’s Personal

The most powerful insight from this conversation is simple:

“Ethical issues with AI will affect you directly.”

This is not a debate for philosophers or policymakers alone.

It is about:

- Your decisions

- Your data

- Your reality

The Bottom Line

AI will bring extraordinary value.

But only if we actively shape it.

The goal is not perfection.

The goal is responsible progress.

As Wagner puts it:

“We introduce innovation… then we solve the problems it creates.”

Call to Action (Life With Artificials)

At Life With Artificials, we believe this is the defining moment:

👉 To design systems that are not only intelligent—but ethical, human-centered, and future-proof

👉 To bring citizens, policymakers, and innovators into the same conversation

👉 To ensure that AI serves humanity—not the other way around
